Word Clouds

After preprocessing, the most frequent words in each class are visualized. The size of each word is proportional to its frequency in that class's corpus.
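The per-class counting behind the word clouds can be sketched as follows. This is a minimal illustration, assuming messages are already preprocessed into space-separated tokens. The helper names `class_word_frequencies` and `font_sizes` and the `top_n`/`max_size` parameters are hypothetical and do not come from the original pipeline.

```python
from collections import Counter

def class_word_frequencies(messages, labels):
    """Count token frequencies separately for each class label."""
    freqs = {}
    for text, label in zip(messages, labels):
        # Assumes each message is already preprocessed into space-separated tokens.
        freqs.setdefault(label, Counter()).update(text.split())
    return freqs

def font_sizes(counter, max_size=100.0, top_n=50):
    """Map the top_n words to sizes proportional to frequency; the most frequent gets max_size."""
    top = counter.most_common(top_n)
    if not top:
        return {}
    max_count = top[0][1]
    return {word: max_size * count / max_count for word, count in top}

# Hypothetical toy corpus, not the project's data.
messages = ["free prize call now", "call me later", "free free entry", "see you later"]
labels = ["spam", "valid", "spam", "valid"]
freqs = class_word_frequencies(messages, labels)
print(freqs["spam"].most_common(2))  # [('free', 3), ('prize', 1)]
print(font_sizes(freqs["spam"])["free"])  # 100.0
```

Scaling linearly against the single most frequent word keeps every size in the range (0, `max_size`].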

This section covers word clouds, train/test splitting, four classification models, SMOTE oversampling, and a full performance comparison before and after SMOTE.


The dataset is split into a training set (80%) used to fit the models, and a test set (20%) held out for unbiased evaluation. Stratification ensures the spam/valid ratio is preserved in both splits.

Training Set: 4,457 messages (80%, used to fit models)

Test Set: 1,115 messages (20%, held out for evaluation)
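A stratified split can be sketched by shuffling and splitting each class on its own. This is a minimal stand-in, assuming a per-class rounding rule. It is not necessarily the procedure the project used, such as scikit-learn's `train_test_split(..., test_size=0.2, stratify=y)`, so class totals may differ by one message from the counts above. The function name `stratified_split` is hypothetical.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_size=0.2, seed=42):
    """Return (train_idx, test_idx), splitting every class in the same proportion."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_size)  # this class's share of the test set
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)

# Hypothetical labels: 20% spam, 80% valid.
labels = ["spam"] * 20 + ["valid"] * 80
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))  # 80 20
```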

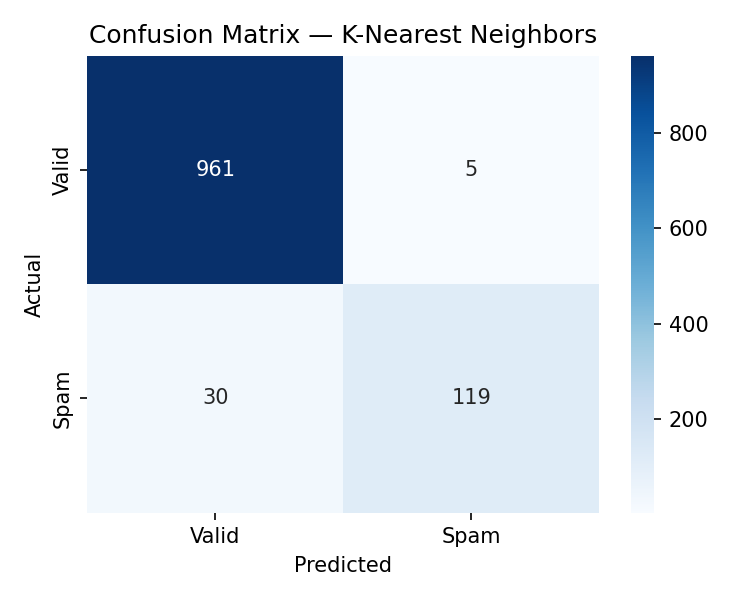

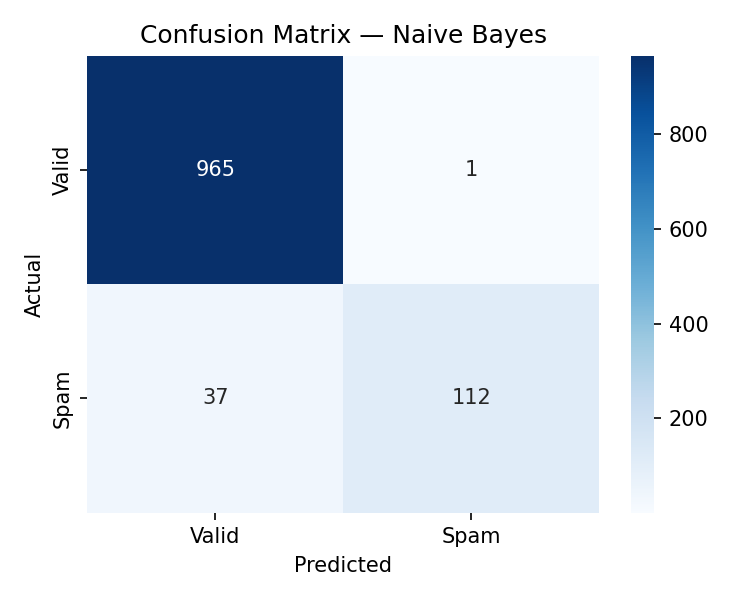

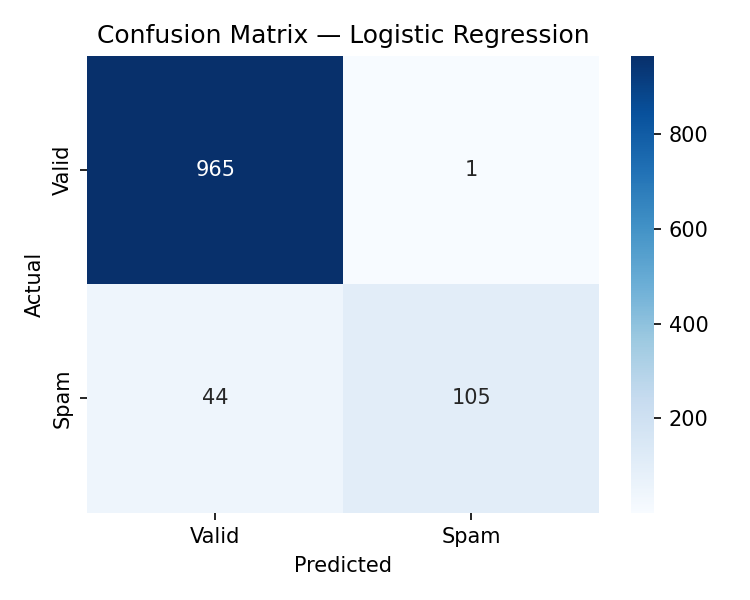

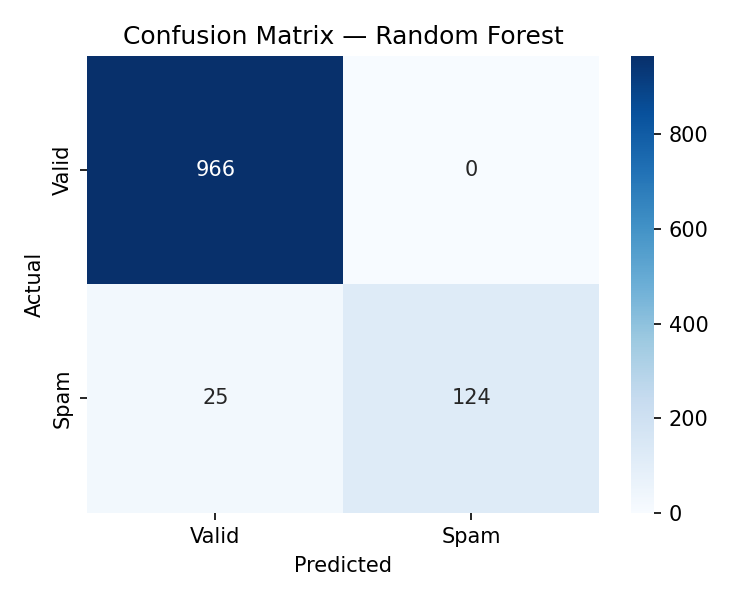

Four classifiers are trained on the original imbalanced training set. Each model is evaluated on the held-out test set with a confusion matrix.
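Each confusion matrix tallies four outcomes, with spam treated as the positive class. The following minimal sketch uses a hypothetical helper, `confusion_counts`. Its 2×2 printout puts actual classes in rows and predicted classes in columns (valid first). This is one common convention and may not match the layout of the original figures.

```python
def confusion_counts(y_true, y_pred, positive="spam"):
    """Return (tp, fp, fn, tn) with `positive` (spam) as the positive class."""
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # spam correctly flagged
            else:
                fp += 1  # valid message wrongly flagged as spam
        elif actual == positive:
            fn += 1      # spam that slipped through
        else:
            tn += 1      # valid message correctly passed
    return tp, fp, fn, tn

y_true = ["spam", "spam", "valid", "valid", "valid"]
y_pred = ["spam", "valid", "valid", "spam", "valid"]
tp, fp, fn, tn = confusion_counts(y_true, y_pred)
print([[tn, fp], [fn, tp]])  # [[2, 1], [1, 1]]
```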

K-Nearest Neighbors

Classifies each message by majority vote of its 5 nearest training examples, where nearness is measured by cosine similarity between TF-IDF vectors.

Confusion Matrix
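The following is a minimal sketch of cosine-similarity KNN over sparse TF-IDF vectors. It assumes raw term frequency and a smoothed idf, ln((1 + n) / (1 + df)) + 1. That idf is scikit-learn's default formula, but the project's exact vectorizer settings are not stated. All function names are hypothetical. The toy example uses k = 3 because the corpus has only six documents; the model described above uses k = 5.

```python
import math
from collections import Counter

def fit_idf(docs):
    """Smoothed inverse document frequency per token: ln((1 + n) / (1 + df)) + 1."""
    n = len(docs)
    df = Counter(tok for doc in docs for tok in set(doc.split()))
    return {tok: math.log((1 + n) / (1 + c)) + 1 for tok, c in df.items()}

def tfidf(doc, idf):
    """Sparse TF-IDF vector (token -> weight); tokens unseen during fitting are dropped."""
    counts = Counter(tok for tok in doc.split() if tok in idf)
    return {tok: c * idf[tok] for tok, c in counts.items()}

def cosine(a, b):
    """Cosine similarity of two sparse vectors; 0.0 if either is empty."""
    dot = sum(w * b.get(tok, 0.0) for tok, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def knn_predict(query, train_vecs, train_labels, k=5):
    """Majority vote among the k training vectors most cosine-similar to the query."""
    ranked = sorted(range(len(train_vecs)),
                    key=lambda i: cosine(query, train_vecs[i]), reverse=True)
    votes = Counter(train_labels[i] for i in ranked[:k])
    # On a tied vote, the class seen first (the nearer neighbour) wins.
    return votes.most_common(1)[0][0]

# Hypothetical toy corpus.
train_docs = ["win free prize now", "free cash prize", "claim free prize",
              "see you at lunch", "are we meeting today", "lunch at noon today"]
train_labels = ["spam"] * 3 + ["valid"] * 3
idf = fit_idf(train_docs)
train_vecs = [tfidf(d, idf) for d in train_docs]
print(knn_predict(tfidf("free prize inside", idf), train_vecs, train_labels, k=3))  # spam
```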

Naive Bayes

Applies Bayes' theorem under the assumption that features are conditionally independent given the class. It is a standard choice for text classification and works well with TF-IDF weights.

Confusion Matrix
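To show how the independence assumption turns into a sum of per-token log-likelihoods, here is a minimal multinomial Naive Bayes sketch with Laplace smoothing. For readability it uses raw term counts rather than the TF-IDF weights fed to the actual model. The helper names and the `alpha` parameter are illustrative assumptions.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs, labels, alpha=1.0):
    """Fit log priors and Laplace-smoothed per-class token log-likelihoods from term counts."""
    class_docs = Counter(labels)
    token_counts = defaultdict(Counter)
    for doc, label in zip(docs, labels):
        token_counts[label].update(doc.split())
    vocab = {tok for counts in token_counts.values() for tok in counts}
    model = {}
    for label, n_docs in class_docs.items():
        total = sum(token_counts[label].values())
        log_prior = math.log(n_docs / len(labels))
        log_lik = {tok: math.log((token_counts[label][tok] + alpha) / (total + alpha * len(vocab)))
                   for tok in vocab}
        model[label] = (log_prior, log_lik)
    return model

def predict_nb(model, doc):
    """Pick the class maximising log P(class) + sum of log P(token | class)."""
    scores = {}
    for label, (log_prior, log_lik) in model.items():
        # Independence assumption: each token adds its own log-likelihood term.
        scores[label] = log_prior + sum(log_lik[tok] for tok in doc.split() if tok in log_lik)
    return max(scores, key=scores.get)

# Hypothetical toy corpus.
docs = ["win free prize now", "free cash prize", "claim free prize",
        "see you at lunch", "are we meeting today", "lunch at noon today"]
labels = ["spam"] * 3 + ["valid"] * 3
model = train_nb(docs, labels)
print(predict_nb(model, "free prize"))  # spam
```

Working in log space avoids the numerical underflow that multiplying many small probabilities would cause.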

Logistic Regression

Learns a linear decision boundary in the TF-IDF feature space and outputs the probability that a message is spam.

Confusion Matrix
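The following minimal sketch fits logistic regression by batch gradient descent on the mean log-loss, with no regularization. It shows why the boundary is linear: P(spam) exceeds 0.5 exactly when w · x + b > 0. The dense two-feature vectors stand in for TF-IDF rows. The learning rate, epoch count, and function names are illustrative assumptions, not the project's solver settings.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict_proba(w, b, x):
    """P(spam | x) = sigmoid(w . x + b); the boundary w . x + b = 0 is linear in x."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train_logreg(X, y, lr=0.5, epochs=500):
    """Batch gradient descent on the mean log-loss; y uses 1 = spam, 0 = valid."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi  # d(log-loss)/d(logit)
            grad_w = [g + err * v for g, v in zip(grad_w, xi)]
            grad_b += err
        w = [wi - lr * g / len(X) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b

# Hypothetical 2-feature vectors standing in for TF-IDF rows.
X = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
y = [1, 1, 0, 0]
w, b = train_logreg(X, y)
print(predict_proba(w, b, [1.0, 0.0]) > 0.5)  # True
```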

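Random Forest

Trains 100 decision trees, each on a bootstrap sample of the training data with random feature subsets, and aggregates their votes. Because the individual trees are decorrelated, the ensemble is far less prone to overfitting than a single tree, and it handles high-dimensional text features well.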

Confusion Matrix
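The following is a deliberately simplified sketch of the two ingredients that make a random forest: bootstrap sampling and random feature subsets, followed by majority voting. To stay short, each "tree" is a depth-1 decision stump. A real random forest grows full trees and redraws the feature subset at every split. With a single split per stump, drawing sqrt(d) features once per tree is equivalent. All names here are hypothetical.

```python
import math
import random
from collections import Counter

def fit_stump(X, y, features):
    """Best single split (feature, threshold, left_label, right_label) by training error."""
    best = None
    for f in features:
        for thr in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= thr]
            right = [lab for row, lab in zip(X, y) if row[f] > thr]
            left_lab = Counter(left).most_common(1)[0][0]  # never empty: thr is a value in X
            right_lab = Counter(right).most_common(1)[0][0] if right else left_lab
            errors = (sum(lab != left_lab for lab in left)
                      + sum(lab != right_lab for lab in right))
            if best is None or errors < best[0]:
                best = (errors, f, thr, left_lab, right_lab)
    return best[1:]

def predict_stump(stump, row):
    f, thr, left_lab, right_lab = stump
    return left_lab if row[f] <= thr else right_lab

def fit_forest(X, y, n_trees=100, seed=0):
    """Each stump sees a bootstrap sample of rows and a random subset of sqrt(d) features."""
    rng = random.Random(seed)
    n, d = len(X), len(X[0])
    m = max(1, round(math.sqrt(d)))
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # bootstrap: rows drawn with replacement
        feats = rng.sample(range(d), m)
        forest.append(fit_stump([X[i] for i in idx], [y[i] for i in idx], feats))
    return forest

def predict_forest(forest, row):
    """Aggregate the trees by majority vote."""
    return Counter(predict_stump(s, row) for s in forest).most_common(1)[0][0]

# Hypothetical 2-feature data.
X = [[0.9, 0.1], [0.8, 0.3], [0.7, 0.2], [0.1, 0.9], [0.2, 0.7], [0.3, 0.8]]
y = ["spam"] * 3 + ["valid"] * 3
forest = fit_forest(X, y)
print(predict_forest(forest, [0.95, 0.05]))  # spam
```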

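The training set contains only 13.4% spam messages. Models trained on imbalanced data tend to favor the majority class (valid), which results in poor spam recall. SMOTE (Synthetic Minority Over-sampling Technique) addresses this by generating synthetic spam samples in the feature space: each new sample lies on the line segment between an existing spam sample and one of its nearest spam neighbors. Oversampling is applied only to the training set; the test set is left untouched.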

Before SMOTE: heavily imbalanced, 13.4% spam

After SMOTE: balanced, 50/50 split
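The interpolation step and the 50/50 rebalancing can be sketched as follows. This is a minimal version of SMOTE, assuming dense feature vectors and Euclidean nearest neighbors. It is not the specific library implementation the project may have used, and the helper names `smote` and `balance` are hypothetical. The minority class needs at least two samples.

```python
import math
import random

def smote(minority, n_new, k=5, seed=0):
    """Create n_new synthetic minority points by interpolating towards random near neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # k nearest other minority samples by Euclidean distance (needs >= 2 samples).
        others = [j for j in range(len(minority)) if j != i]
        neighbours = sorted(others, key=lambda j: math.dist(base, minority[j]))[:k]
        nb = minority[rng.choice(neighbours)]
        gap = rng.random()  # position along the segment base -> nb, in [0, 1)
        synthetic.append([a + gap * (c - a) for a, c in zip(base, nb)])
    return synthetic

def balance(X, y, minority_label="spam", k=5, seed=0):
    """Oversample the minority class until both classes have equal counts (training data only)."""
    minority = [x for x, lab in zip(X, y) if lab == minority_label]
    n_new = (len(y) - len(minority)) - len(minority)
    new = smote(minority, max(0, n_new), k, seed)
    return X + new, y + [minority_label] * len(new)

# Hypothetical data: 4 spam points in the unit square, 8 valid points elsewhere.
pts = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
X = pts + [[5.0, 5.0]] * 8
y = ["spam"] * 4 + ["valid"] * 8
Xb, yb = balance(X, y, k=2)
print(yb.count("spam"), yb.count("valid"))  # 8 8
```

Because each synthetic point is a convex combination of two real spam samples, it stays inside the region the spam class already occupies rather than duplicating existing rows.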

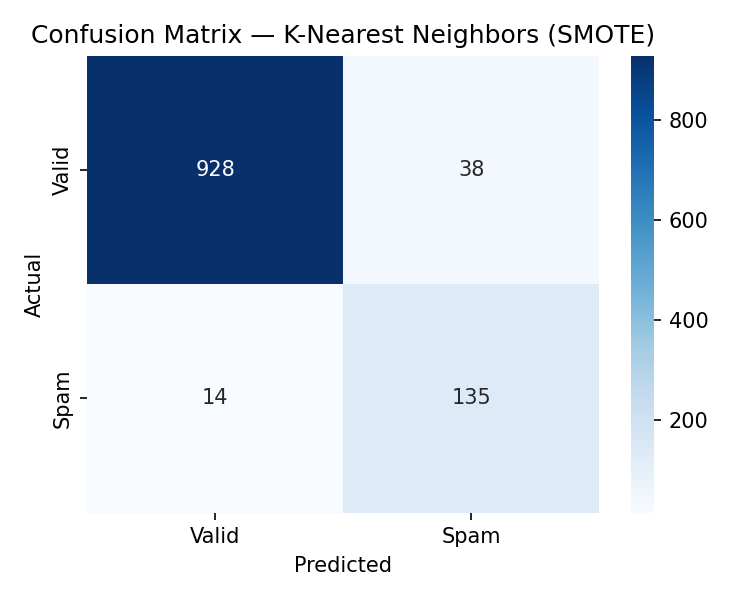

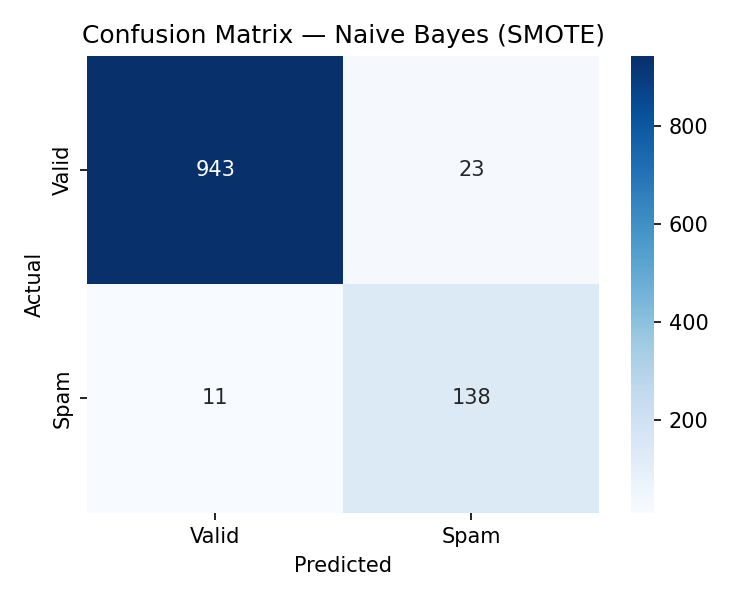

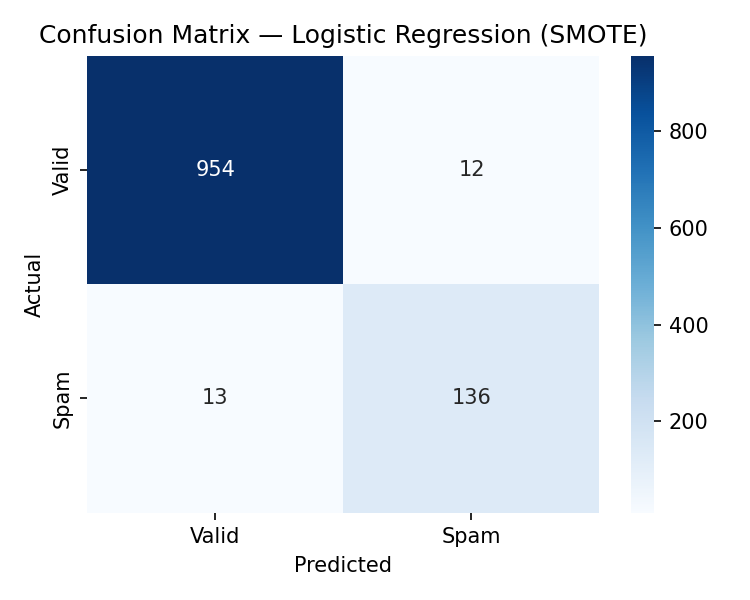

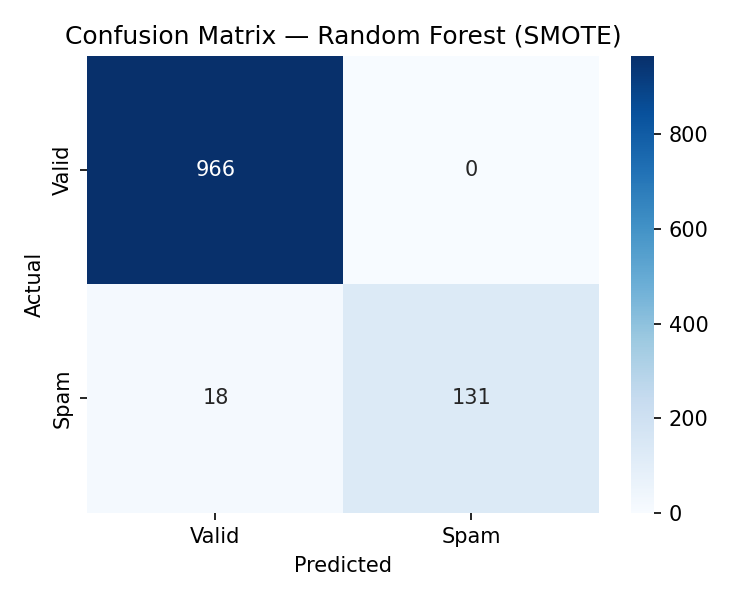

The same four models are retrained on the SMOTE-balanced training set and evaluated on the same original test set.

Confusion Matrix (Post-SMOTE): K-Nearest Neighbors

Confusion Matrix (Post-SMOTE): Naive Bayes

Confusion Matrix (Post-SMOTE): Logistic Regression

Confusion Matrix (Post-SMOTE): Random Forest

All metrics are measured on the held-out 20% test set. F1 scores reflect spam-class performance.
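Accuracy and spam-class F1 can diverge sharply on imbalanced data, which is why the table below reports both. The following minimal sketch computes the metrics from confusion-matrix counts. The counts are hypothetical and do not come from the results. The helper name `spam_metrics` is also illustrative.

```python
def spam_metrics(tp, fp, fn, tn):
    """Accuracy plus spam-class precision, recall and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return accuracy, precision, recall, f1

# Hypothetical counts: 10 spam and 90 valid messages.
acc, prec, rec, f1 = spam_metrics(tp=8, fp=2, fn=2, tn=88)
print(round(acc, 2), round(f1, 2))  # 0.96 0.8
```

Here, 4 mistakes out of 100 messages cost only 4 points of accuracy, but they cost 20 points of spam F1 because they are concentrated in the small spam class.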

Key finding: SMOTE improved spam-class F1 for 3 of 4 models. Logistic Regression improved the most (+9.2 percentage points). K-Nearest Neighbors was the only model to decline (-3.3 points). Random Forest achieved the highest absolute F1 after SMOTE, at 93.6%.

| Model | Accuracy Before | Accuracy After | F1 Before | F1 After | F1 Change (pp) |
|---|---|---|---|---|---|
| K-Nearest Neighbors | 97.0% | 95.0% | 87.2% | 83.9% | -3.3 |
| Naive Bayes | 97.0% | 97.0% | 85.5% | 89.0% | +3.5 |
| Logistic Regression | 96.0% | 98.0% | 82.3% | 91.6% | +9.2 |
| Random Forest | 98.0% | 98.0% | 90.8% | 93.6% | +2.7 |